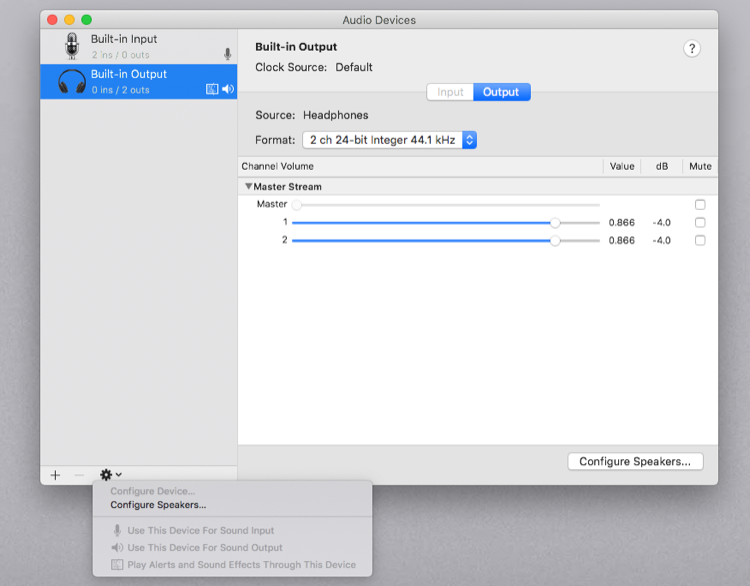

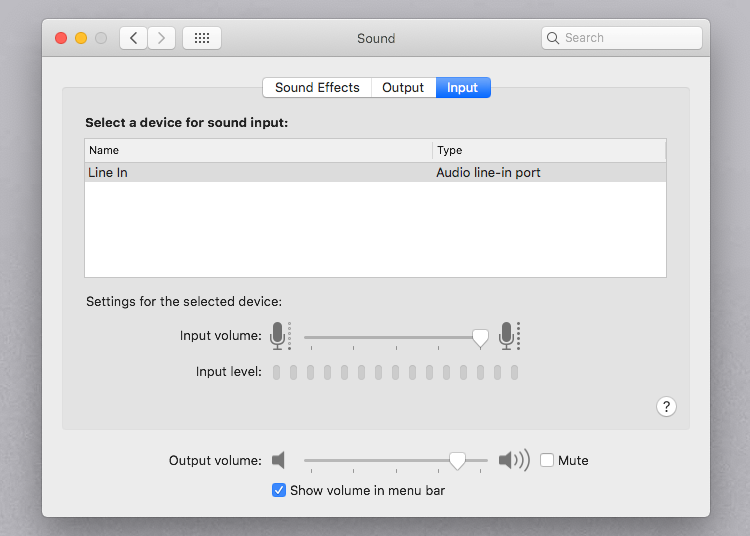

Configuring an audio unit is surprisingly hard, since every property can be configured for every bus (sometimes called element) of every input or output of every unit, and the documentation is extremely terse on which of these combinations are valid. Some invalid combinations return error codes, while others only lead to errors during playback/recording. Furthermore, the definition of what constitutes an input or output is interpreted quite differently in different places: One place calls a microphone an input, since it records audio; another place will call it an output, since it outputs audio data to the system. In one crazy example, you have to configure a microphone unit by disabling its output bus 0, and enabling its input bus 1, but then read audio data from its ostensibly disabled output bus 0.
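To make the microphone case concrete, here is a minimal sketch of the two enable/disable settings, written with `ctypes` rather than CFFI so it stays self-contained. The helper names `recording_io_settings` and `apply_settings` are made up for illustration, and `set_property` stands in for the real `AudioUnitSetProperty`, which takes the same six arguments. The numeric constants are the values I recall from Apple's AudioUnit headers, so check them against the headers before relying on them.

```python
import ctypes

# Values as declared in Apple's AudioUnit headers (assumption: verify locally).
kAudioOutputUnitProperty_EnableIO = 2003
kAudioUnitScope_Input = 1
kAudioUnitScope_Output = 2

# A few OSStatus codes that invalid combinations can return (same caveat).
KNOWN_ERRORS = {
    -10879: "kAudioUnitErr_InvalidProperty",
    -10877: "kAudioUnitErr_InvalidElement",
    -10866: "kAudioUnitErr_InvalidScope",
}


def recording_io_settings():
    """Return (property, scope, element, value) tuples for a microphone unit."""
    return [
        # Disable output bus 0 ...
        (kAudioOutputUnitProperty_EnableIO, kAudioUnitScope_Output, 0, 0),
        # ... and enable input bus 1.
        (kAudioOutputUnitProperty_EnableIO, kAudioUnitScope_Input, 1, 1),
    ]


def apply_settings(set_property, unit, settings):
    """Apply each setting and raise on the first nonzero OSStatus.

    set_property is expected to behave like AudioUnitSetProperty:
    (unit, property, scope, element, data pointer, data size) -> OSStatus.
    """
    for prop, scope, element, value in settings:
        data = ctypes.c_uint32(value)
        status = set_property(
            unit, prop, scope, element, ctypes.byref(data), ctypes.sizeof(data)
        )
        if status != 0:
            name = KNOWN_ERRORS.get(status, "unknown error")
            raise RuntimeError(
                f"property {prop} (scope {scope}, element {element}) "
                f"failed with OSStatus {status} ({name})"
            )
```

Passing the setter in as an argument keeps the sketch runnable without macOS. Note that a zero status only means the call was accepted: as described above, some invalid combinations only surface later, during playback or recording.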

In order to use an audio unit, you create an AudioComponentDescription that describes whether you want a source or sink unit, or an effect unit, and what kind of effect you want (AudioComponent is an alternative name for audio unit). With the description, you can create an AudioComponentInstance, which is then an opaque struct pointer to your newly created audio unit. Every audio unit has several properties, such as a sample rate, block sizes, and a data format, and parameters, which are like properties, but presumably different in some undocumented way. The next step is then to configure the audio unit using AudioUnitGetProperty and AudioUnitSetProperty.
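Creating a unit from a description can be sketched roughly like this. The sketch uses `ctypes` rather than CFFI so it stays self-contained. `fourcc`, `hal_output_description`, and `new_audio_unit` are illustrative names of my own, and the choice of Apple's HAL output unit is an assumption about which unit you want. The struct layout and the four-character codes follow Apple's headers as far as I know them. The last function only works on macOS, so treat it as untested outside that platform.

```python
import ctypes
import ctypes.util
import sys


def fourcc(code):
    """Pack a four-character code such as 'auou' into a 32-bit integer."""
    if len(code) != 4:
        raise ValueError("expected exactly four characters")
    return int.from_bytes(code.encode("ascii"), "big")


class AudioComponentDescription(ctypes.Structure):
    # Five 32-bit fields, in the order declared in AudioComponent.h.
    _fields_ = [
        ("componentType", ctypes.c_uint32),
        ("componentSubType", ctypes.c_uint32),
        ("componentManufacturer", ctypes.c_uint32),
        ("componentFlags", ctypes.c_uint32),
        ("componentFlagsMask", ctypes.c_uint32),
    ]


def hal_output_description():
    """Describe Apple's hardware I/O unit."""
    return AudioComponentDescription(
        componentType=fourcc("auou"),          # kAudioUnitType_Output
        componentSubType=fourcc("ahal"),       # kAudioUnitSubType_HALOutput
        componentManufacturer=fourcc("appl"),  # kAudioUnitManufacturer_Apple
        componentFlags=0,
        componentFlagsMask=0,
    )


def new_audio_unit(description):
    """Find a matching component and instantiate it (macOS only)."""
    if sys.platform != "darwin":
        raise OSError("Core Audio is only available on macOS")
    toolbox = ctypes.CDLL(ctypes.util.find_library("AudioToolbox"))
    toolbox.AudioComponentFindNext.restype = ctypes.c_void_p
    toolbox.AudioComponentFindNext.argtypes = [
        ctypes.c_void_p, ctypes.POINTER(AudioComponentDescription)]
    toolbox.AudioComponentInstanceNew.restype = ctypes.c_int32
    toolbox.AudioComponentInstanceNew.argtypes = [
        ctypes.c_void_p, ctypes.POINTER(ctypes.c_void_p)]

    component = toolbox.AudioComponentFindNext(None, ctypes.byref(description))
    if not component:
        raise RuntimeError("no matching audio component found")
    unit = ctypes.c_void_p()  # receives the opaque AudioComponentInstance
    status = toolbox.AudioComponentInstanceNew(component, ctypes.byref(unit))
    if status != 0:
        raise RuntimeError(f"AudioComponentInstanceNew failed: OSStatus {status}")
    return unit
```

On macOS, `new_audio_unit(hal_output_description())` should hand back the opaque pointer that all later property calls take as their first argument.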

The basic unit of any CoreAudio program is the audio unit. An audio unit can be a source (aka microphone), a sink (aka speaker) or an audio processor (both sink and source). Each audio unit can have several input buses, and several output buses, each of which can have several channels. The meaning of these buses varies wildly and is often underdocumented.

CoreAudio is the native audio library for macOS. It is known for its high performance, low latency, and horrible documentation. After having used the native audio APIs on all three platforms, CoreAudio was by far the hardest one to use. The main problem is lack of documentation and lack of feedback, and plain missing or broken features.

For reference, the singular use case in PythonAudio is playing/recording of short blocks of float data at arbitrary sampling rates and block sizes. All connected sound cards should be listable and selectable, with correct detection of the system default sound card (a feature that is very unreliable in PortAudio).

A note on naming: WASAPI is part of the Windows Core Audio APIs. To avoid confusion with the macOS API of the same name, I will always refer to it as WASAPI.


This is part one of a three-part series on the native audio APIs for Windows, Linux, and macOS. This first part is about Core Audio on macOS.

It has long been a major frustration for my work that Python does not have a great package for playing and recording audio. My first step to improve this situation was a small contribution to PyAudio, a CPython extension that exposes the C library PortAudio to Python. However, I soon realized that PyAudio mirrors PortAudio a bit too closely for comfort. Thus, I set out to write PySoundCard, which is a higher-level wrapper for PortAudio that tries to be more pythonic and uses NumPy arrays instead of untyped bytes buffers for audio data. However, I then realized that PortAudio itself had some inherent problems that a wrapper would not be able to solve, and a truly great solution would need to do it the hard way: Instead of relying on PortAudio, I would have to use the native audio APIs of the three major platforms directly, and implement a simple, cross-platform, high-level, NumPy-aware Python API myself. This effort resulted in PythonAudio, a new pure-Python package that uses CFFI to talk to PulseAudio on Linux, Core Audio on macOS, and WASAPI on Windows. This series of blog posts summarizes my experiences with these three APIs and outlines the basic structure of how to use them.